When designing a software solution, ‘micro-services’ is one of today’s buzz words with which you need to be compliant to be cool. But what is a micro-service and what is the size of a micro-service?

As with every topic on application architecture and software engineering, a good source of inspiration are the blog posts from Martin Fowler (http://martinfowler.com/microservices/). In his definition, a micro-service:

- is running in its own process,

- is built around business capabilities,

- is independently deployable.

Another statement says that one micro-service should be limited to one bounded context in a DDD approach. I can imagine that enterprise architects love such a statement. But before we know it, we can fall into the pitfall of defining an upfront, enterprise wide micro-service catalogue. The same pitfall which has caused so many SOA projects to get stuck in endless catalogue discussions.

So, while this is all very relevant, does it really help us in our quest for the micro-services that we need to solve our problems? Rather than trying to define what a micro-service should be, the maximum lines of codes required, or just how many pizzas you need to feed the team making it, let’s start at the beginning of the problem that we try to solve. Today, companies and especially the traditional banks and insurance companies, are all facing the challenge of how to survive in the new digital economy. Their long-time dominance is being attacked from all sides by predators, both large and small, and none of them are playing by the established rules!

To stay competitive, the incumbent players must create new and compelling, personalized services very quickly and very frequently. And these solutions must be flexible and easily adapted to changing market or economic needs.

Another important consideration is the load that these offerings are putting on the existing IT systems. The services are being driven by new generation apps, and in the future by all the intelligent things that will surround us. The expected loads are hard to predict so therefore it is vital the overall solution must be easily scalable both up and down. These are not the only non-functional requirements, but they already give structural guidance in our search for micro-services.

Now Let’s look at scalability. A good scaling strategy starts with a good scalable model. How do all the parameters that drive the solution architecture evolve with the desired throughput? This is a very difficult exercise. In traditional software architectures, the solution is split in multiple tiers, each one specialized in a specific, often technical, aspect. A four-tier architecture, consisting of a front-end tier, an application tier, a data tier and a messaging tier which has been, and still is, very popular. In this type of architecture, the mapping of the functional throughput to the technical throughput is a daunting task: How many database transactions will be fired (taking possible batching into account)? How much additional memory/threads is needed? How many messages – and how do these then in their turn result in database transactions?… Performance tuning in such an architecture is often limited to trying to achieve a single throughput target without taking into account other changing conditions.

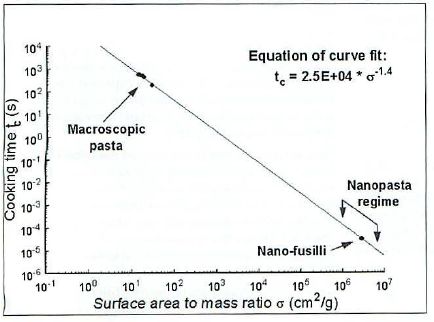

Scaling such systems is often akin to scaling a recipe to satisfy the needs of twice the number of guests turning up unexpectedly at your door. You know that you will need to roast twice the weight (amount of food) to feed the hungry, but how will this change affect the overall cooking time? Can you still guarantee that dinner will be served on time, and still provide a perfect result? Do you now need twice the time to bake the roast that’s now double the size? What would happen if you cut it into two? But can you roast both pieces in the same oven? Or will you have to turn up the heat? And will the end result still be the same? And once you have figured this out for the roast, do you then apply the same rules to cooking the fish?

Figure 2. Rocket science pasta cooking

A scaling model which must translate functional parameters into technical parameters requires an extremely deep understanding of the implementation. This is no simple task. Frankly, I have not encountered a system where this was possible because generally, you don’t have all the information needed to make this important call.

It’s much simpler to make a sizing model by limiting the exercise to business functions. For example, on the first weekend of the sales, one can typically predict there will be an increase of 50% in payments at the POS. So, to calculate the expected increase in digital signatures, and in turn the number of underlying payment transactions is not rocket science.

A simple scaling model is a good driver to let a micro-service coincide with a business function. But a business function does not equal an end user interaction, which nowadays generally is called “an API”. A business function might involve multiple user interactions. Actually, in the financial service industry, this is most often the case.

Figure 3. DLL Hell

Now let’s look at the ability to quickly create new business services and enable them to continuously evolve. A crucial aspect of this is to control and manage the different versions of the services. You can split a business function into finer grained services, but don’t go too far. If you go too fine grained you may end up with lots of low-level dependencies and then,…..say hello to dll hell 2.0 (Or is it 3.0, dll hell 2.0 being the continuous fight with the conflicting versions of the Xerces parser in J2EE servers). You cannot force all the services to be automatically upgraded when an underlying service evolves. This is both business wise, and operationally, not possible. This means that you have to support the coexistence of multiple versions of a service. And this is not always obvious if they are too small.

Fast and easy scaling, together with continuous and controlled change are two drivers needed to constrain micro-services to business functions which can be well defined and where the interactions are clearly mapped out.

And of course, this post could have been much shorter as the answer is simply 10^(-6).